AI-based video production can now generate stories with realistic graphics and action-packed scenes in a short time. One of the most important challenges for creators is keeping the same characters consistent across clips. Inconsistency breaks visual continuity and reduces the audience’s immersion. Seedance AI, combined with Pippit, brings together advanced generation models and workflows that address this issue. It helps maintain identity, style, and consistent movement across a series of scenes, and simplifies scalable video production.

Understanding Character Consistency in AI Videos

Character consistency means that the same character looks the same in every generated scene. It covers facial features, clothing, proportions, and styling. This uniformity makes the story clearer and more credible to the audience. Inconsistent visuals undermine immersion and lower the quality of the content. An AI video generator plays a vital role in automating this consistency, reducing manual work, and leaving more room for creativity. Traditional workflows often require repeated rework, which delays production and introduces errors in multi-scene storytelling projects at scale.

Seedance AI Features for Consistent Characters

Seedance AI offers tools that keep character appearance consistent across scenes. It uses reference images, motion tracking, and prompt understanding to preserve identity during video generation. Multi-shot consistency keeps characters looking the same across transitions and angles. Stable rendering helps prevent unwanted changes in face or clothing between frames. Improved prompt interpretation aligns the generated output more closely with user instructions. Pippit is compatible with Seedance, which supports scalable production of consistent, character-driven storytelling projects across different video formats.

Leveraging Advanced Models for Better Results

Advanced models increase the realism of visuals, movement, and scene continuity in production. Dreamina Seedance 2.0 produces more detail and supports longer sequences with enhanced lighting and textures. It delivers smoother transitions between shots and greater character stability in complex scenes. The result is production-ready quality suitable for professional storytelling. The free AI video editor is open-source and embedded in Pippit, so creators can enhance outputs without specialized knowledge, make quicker corrections, and refine videos more precisely for numerous platforms.

Steps to Build AI Videos With Consistent Characters Using Seedance AI Tools

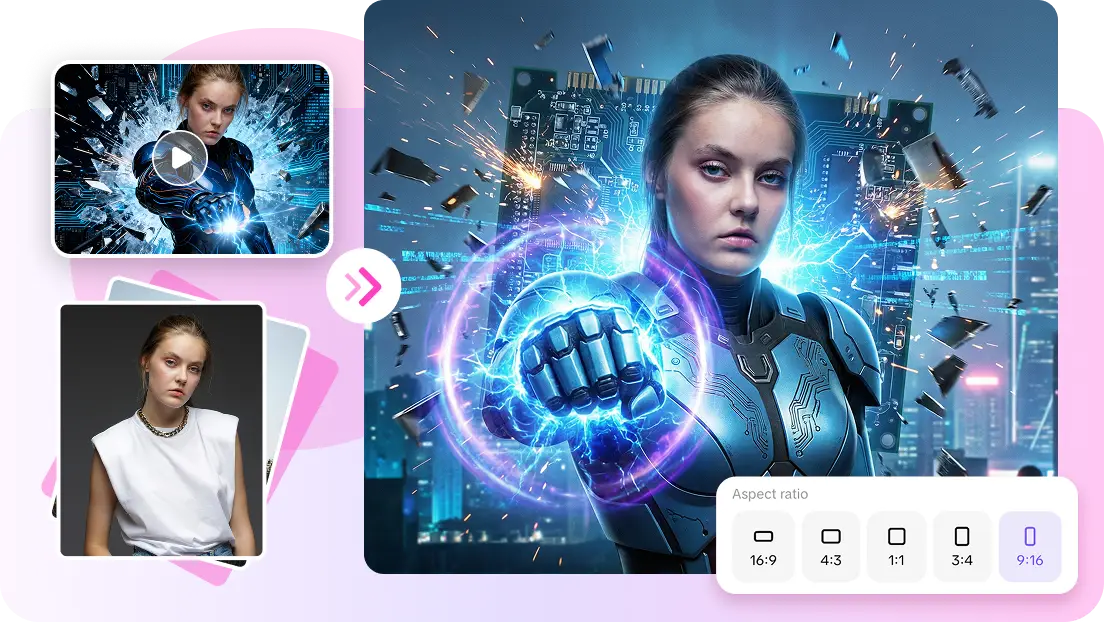

Step 1: Set up consistent character creation

-

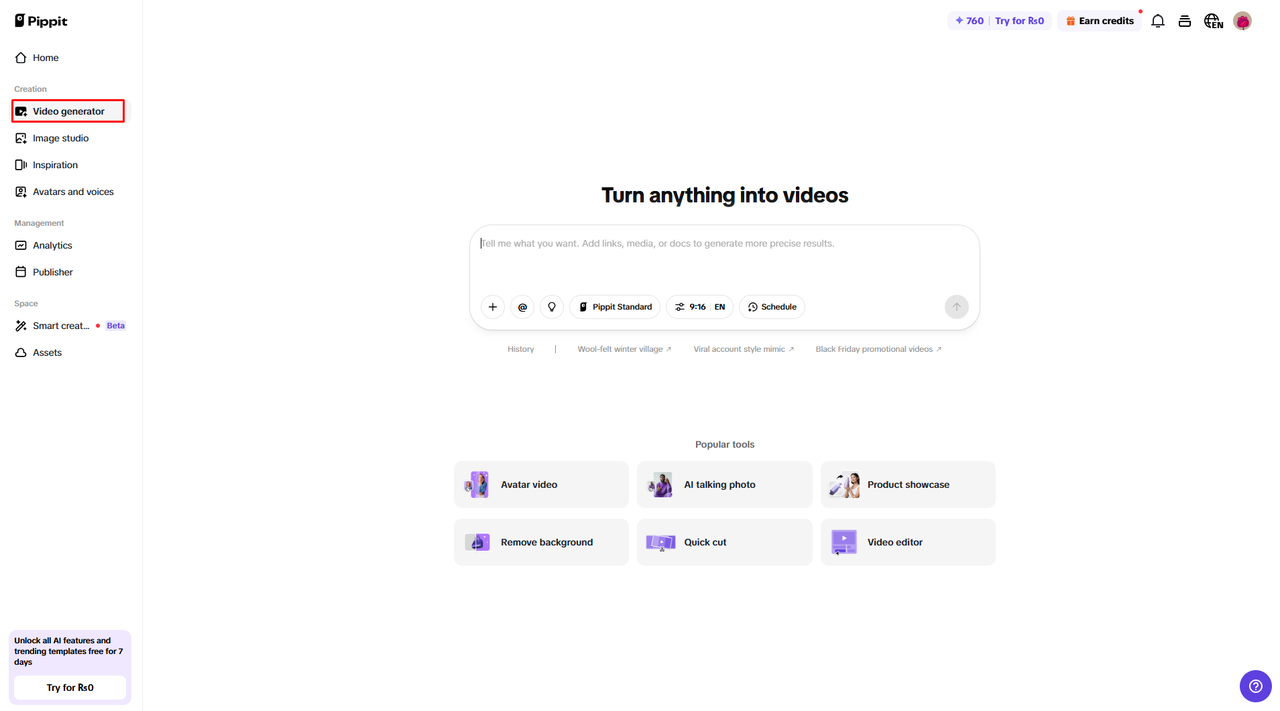

Sign up for Pippit and access the platform.

-

Navigate to the “Video generator” tab from the dashboard.

-

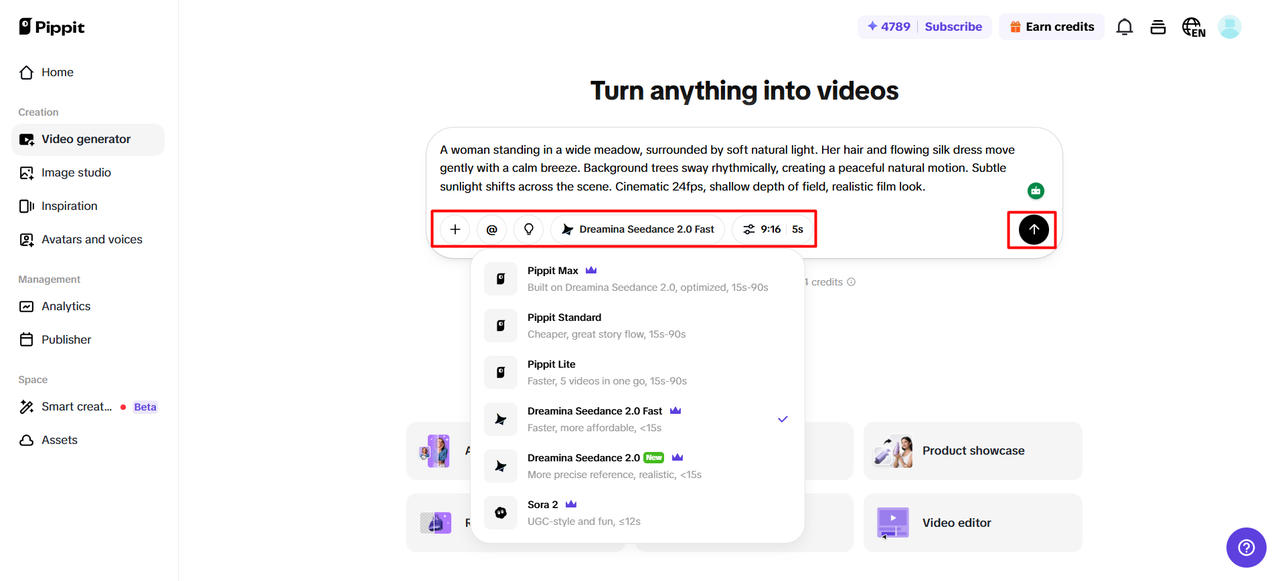

Select an AI model like Dreamina Seedance 2.0, Dreamina Seedance 2.0 Fast, Sora 2, Pippit Standard, Pippit Max, or Pippit Lite.

-

Write a detailed prompt describing your character and scene consistency.

-

Choose video length, language, subtitles, and aspect ratio.

-

Click “+” to upload reference images or videos for character consistency.

-

Click “Generate”.
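The detailed prompt in Step 1 is easier to keep consistent if the fixed character traits are written once and reused for every scene. The snippet below is a minimal sketch of that idea in Python. It only builds text that could be pasted into the prompt box; it does not call Pippit or Seedance, and the names `CharacterSheet`, `build_prompt`, and the example character "Mira" are hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterSheet:
    """Hypothetical record of the traits that should stay fixed in every clip."""
    name: str
    face: str
    clothing: str
    style: str

    def describe(self) -> str:
        # One fixed description string, reused unchanged in every prompt.
        return (
            f"{self.name}: {self.face}; wearing {self.clothing}; "
            f"rendered in {self.style}"
        )


def build_prompt(character: CharacterSheet, scene: str) -> str:
    """Combine the fixed character description with a per-scene action."""
    return (
        f"{character.describe()}. Scene: {scene}. "
        "Keep face, clothing, and proportions identical to the reference images."
    )


# Example character (an assumption for illustration, not from the article).
mira = CharacterSheet(
    name="Mira",
    face="round face, short black hair, green eyes",
    clothing="a yellow raincoat",
    style="soft 3D animation",
)

print(build_prompt(mira, "Mira walks through a rainy market at dusk"))
```

Only the scene text changes between prompts, so the character description cannot drift from one clip to the next.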

Step 2: Generate character-consistent videos

-

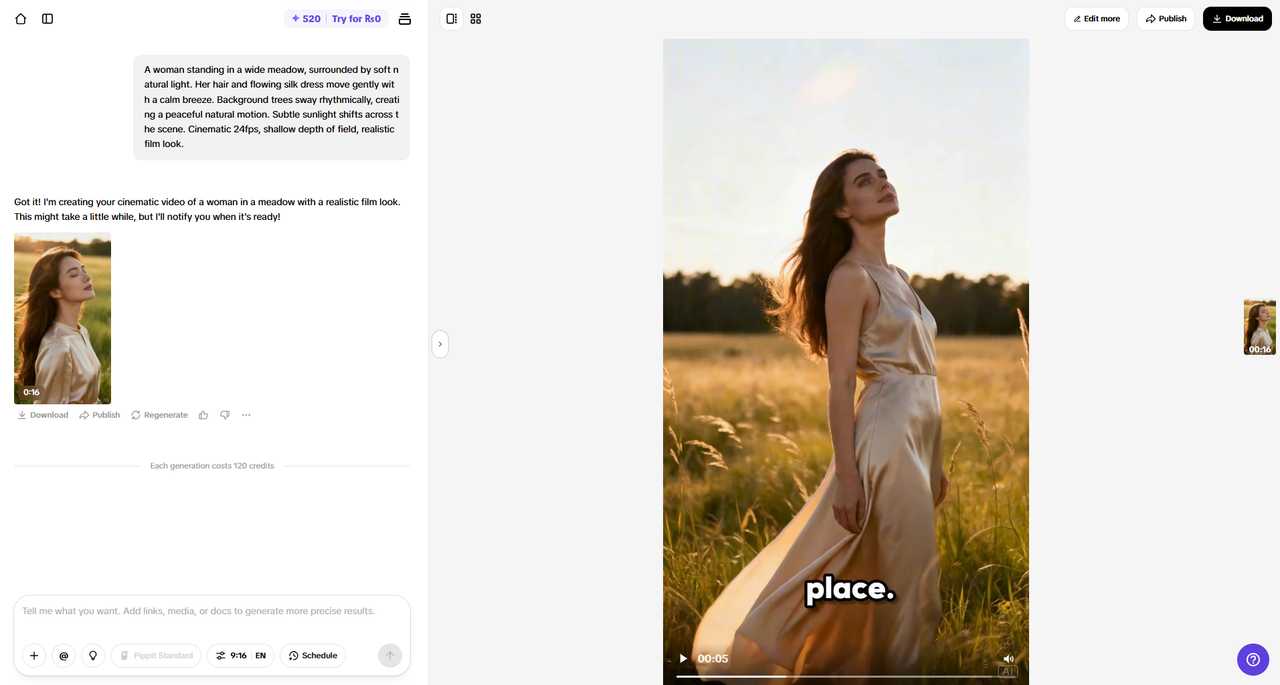

After clicking “Generate”, the AI creates your video with consistent visuals.

-

It manages transitions, captions, avatars, pacing, voice, lyrics, and enhancements.

-

You will receive a video draft to review

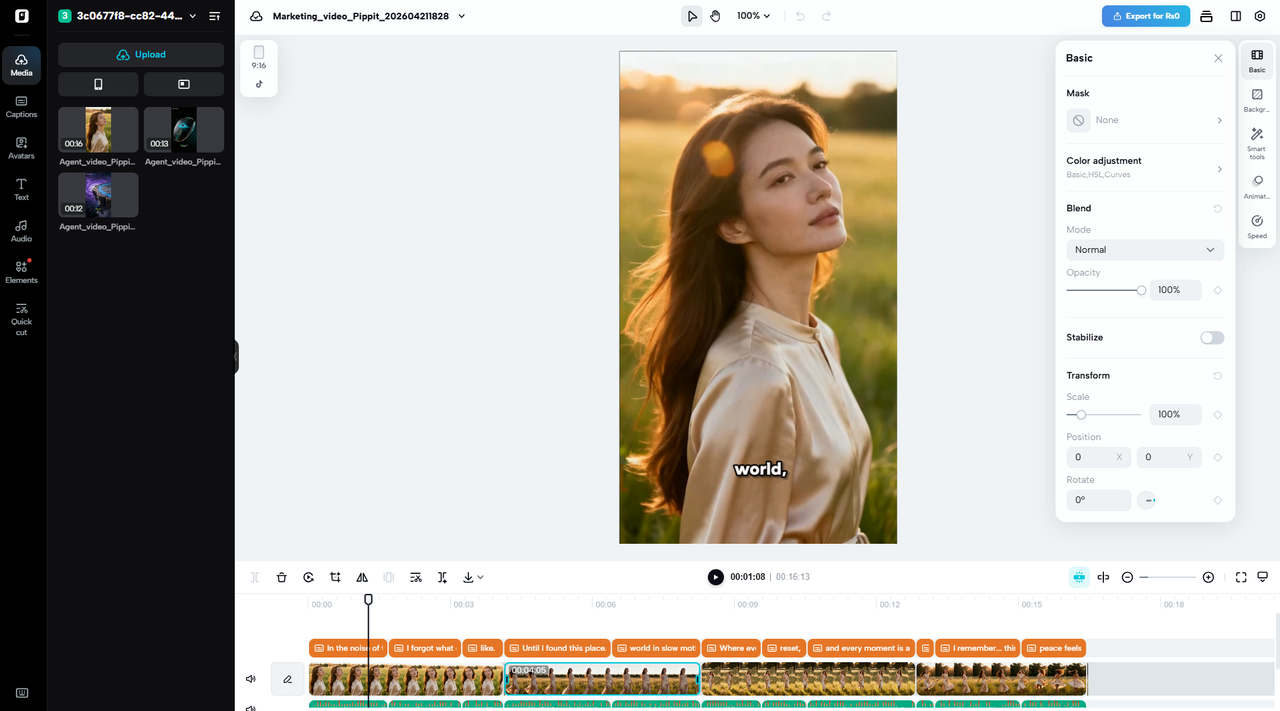

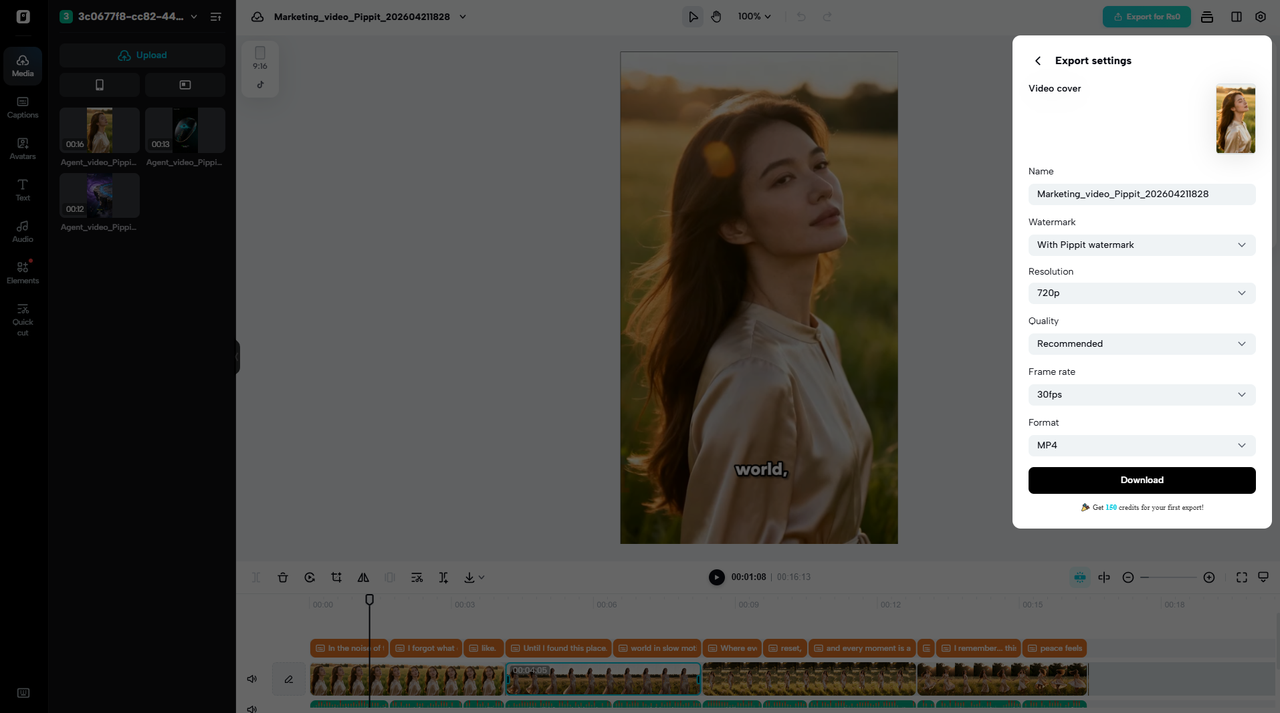

Step 3: Edit and export with precision

-

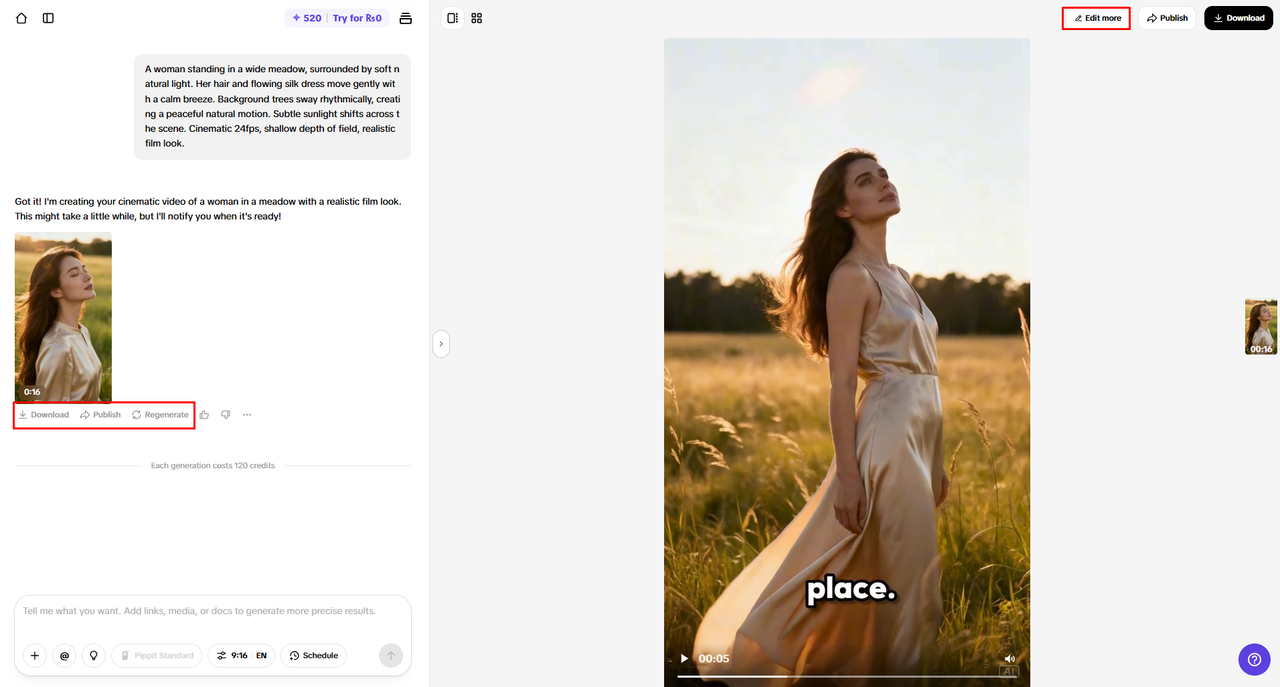

Click “Download” or use “Regenerate”. Open “Edit more” for customization.

-

Modify captions, colors, alignment, and effects.

-

Add music and refine visuals.

-

Click “Export” to complete.

-

Select “Publish” or “Download” with chosen settings.

Key Factors for Maintaining Character Consistency

Character consistency depends on several technical and creative decisions made during generation. Reference assets guide the AI toward accurate visual replication across scenes. Fine-tuning the prompt improves the precision of the character's portrayal and reduces variation. Scene continuity means arranging shots in a logical order that does not disrupt the visuals. Consistent styling keeps colors, textures, and lighting from changing throughout the video. Iterative refinement improves outputs by adjusting prompts and regenerating until they better fit the creative intent. Together, these practices make multi-scene AI production much more reliable.

- Reference asset usage improves the visual accuracy of generated scenes.
- Detailed prompting increases identity consistency and reduces variation.
- Scene continuity control keeps the storytelling continuous between clips.
- Consistent styling keeps colors, lighting, and textures unified.
- Iterative refinement improves quality through prompt adjustments.

Scaling Character-Based Content With Pippit

Pippit AI workflows are structured to support scaling character-based content. Creators can produce entire series of videos while maintaining a consistent character identity across episodes. This reduces manual corrections and increases production efficiency. Prompts and reference assets are reusable, enabling quick generation cycles. Across a series of video campaigns, consistent branding becomes easier to maintain. Pippit enables producers to create a large volume of content without sacrificing narrative quality or visual coherence. It helps meet growing content needs without complicating production or adding a heavy editing burden. Because it scales, both solo creators and production teams can use it.
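Reusing prompts and reference assets across episodes can be planned before opening the generator. The following is a rough sketch, assuming a creator keeps one character description and one set of reference files for a whole series. It only prepares a list of per-episode entries to work through by hand; it does not call any Pippit or Seedance API, and the function name `plan_episodes`, the dictionary keys, and the file names are all hypothetical.

```python
def plan_episodes(character_description, reference_files, scenes):
    """Pair the same character description and references with each scene."""
    jobs = []
    for number, scene in enumerate(scenes, start=1):
        jobs.append({
            "episode": number,
            # The character text is identical in every episode's prompt.
            "prompt": f"{character_description}. Scene: {scene}",
            # Copy the list so each entry can be edited independently.
            "references": list(reference_files),
        })
    return jobs


# Example inputs (assumptions for illustration only).
series = plan_episodes(
    character_description="Mira, short black hair, yellow raincoat",
    reference_files=["mira_front.png", "mira_side.png"],
    scenes=["arrives at the market", "meets a street musician"],
)

for job in series:
    print(job["episode"], job["prompt"], job["references"])
```

Keeping the plan as plain data makes it easy to check that every episode uses the same description and the same reference images before any video is generated.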

Conclusion

Consistent characters strengthen the narrative and hold viewers’ attention. AI tools simplify complex production problems and keep visuals uniform across scenes. Together, Seedance AI and Pippit offer scalable video-generation workflows. They help creators produce high-quality, professional content with less effort. This integration improves branding, storytelling, and the efficiency of modern AI-powered video generation across platforms and content types. It also supports sustainable content creation without sacrificing creative control or visual integrity.